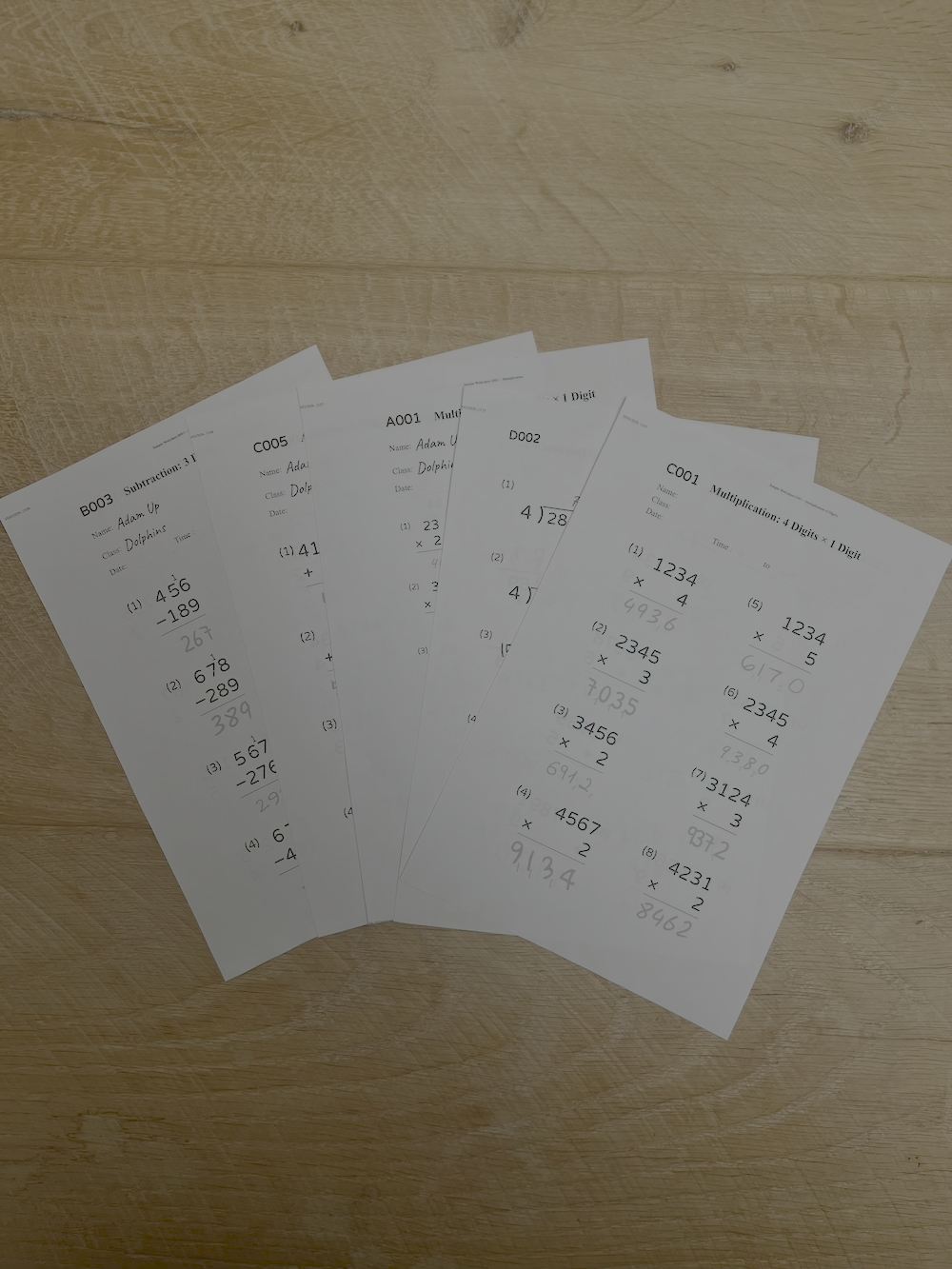

Five years ago, the idea of pointing your phone at a page of messy long division and getting back an accurate, question-by-question mark scheme felt like a tech demo, not a tool. Handwriting recognition existed, but it was built for adult print under good lighting. Children's homework squeezed into tiny squares, with crossings-out, doodles and scribbles, carry marks, thinking written in the margins, pencil smudges, and the occasional juice stain, was another problem entirely.

Today, that has changed. Vision models have become dramatically better at messy handwriting, and the apps wrapped around them have stopped pretending a worksheet is a receipt. The result: 40 answers marked in seconds rather than 40 minutes. Teachers and tutors who would never install a generic "AI tutor" in their classroom or practice happily use a marking app, because the job it does is specific, observable, and reliably gives back four to six hours a week.

What actually changed

Three things came together and none of them was a single magic model release:

- General-purpose vision models learned to read pages, not just words. The same models that can describe a photograph can also reliably work out where question numbers, workings and final answers live on a page, even when the page wasn't designed for machines to read.

- Handwriting datasets grew up. Reading a single digit was never the whole problem; the hard part was inferring what the child meant in context. Larger, more diverse training data means a 4 that looks a bit like a 9 gets the right benefit of the doubt when the digits around it and the expected answer make the intent obvious.

- On-device preprocessing caught up. Phones with powerful cameras today can deskew, enhance, and segment a photo of a worksheet in a few hundred milliseconds, before the image ever reaches a server. That matters: the best OCR in the world cannot recover from a blurry, skewed photo taken in a warm kitchen at 9:30 pm.

None of these was dramatic on its own. Together, they crossed a usefulness threshold. An app that gets 97–99% of primary-school maths answers right, consistently, in under 30 seconds per worksheet, solves a problem real people have in classrooms, tutoring sessions, and kitchens every single day.

Each maths booklet has around 40 questions. At 35 seconds a question, marking takes over 20 minutes per child, per day. Reducing that to under five minutes per sheet buys back the equivalent of a full working day each month.
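The arithmetic behind that claim is easy to check. Here is a quick sketch using the figures above; the 20 marking days per month is an assumption added for illustration, not a number from the text:

```python
# Figures from the text: about 40 questions per booklet, about 35 seconds
# to mark each one by hand, and under five minutes per sheet with an app.
QUESTIONS_PER_BOOKLET = 40
SECONDS_PER_QUESTION = 35
APP_MINUTES_PER_SHEET = 5

# Assumption (not from the text): one booklet per child on 20 days a month.
MARKING_DAYS_PER_MONTH = 20

manual_minutes = QUESTIONS_PER_BOOKLET * SECONDS_PER_QUESTION / 60
saved_minutes_per_day = manual_minutes - APP_MINUTES_PER_SHEET
saved_hours_per_month = saved_minutes_per_day * MARKING_DAYS_PER_MONTH / 60

print(f"Manual marking: {manual_minutes:.1f} min per booklet")
print(f"Saved per child: {saved_hours_per_month:.1f} h per month")
```

Under that assumption the saving comes to roughly six hours per child per month, which is in the region of one working day.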

How modern maths OCR works

It helps to think of a good maths-marking app not as "one AI model" but as a short pipeline, where each stage has a specific job:

1. Detecting questions

Before anything can be "read", the app has to work out what counts as a question on the page. That's a layout problem: finding numbered blocks, associating them with the right workings and final answer, and ignoring decorative elements, page headers and margin doodles.

Modern object-detection models, fine-tuned on real worksheets rather than clean PDFs, handle this surprisingly well. The big leap recently has been robustness: the technology no longer struggles when a question wraps across two columns, or when the photo is taken at a slight angle or in low light.
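To make the "associating" step concrete, here is a deliberately simple sketch. It assumes a detector has already produced boxes for question numbers and handwritten regions, and it attaches each handwritten region to the nearest question label. The `Box` type and `assign_answers` function are hypothetical names for illustration, and nearest-centre matching is a stand-in heuristic, not how any particular app does it:

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Box:
    label: str  # e.g. "Q1" for a question number, "" for handwriting
    x: float    # centre x, in arbitrary page units
    y: float    # centre y, in arbitrary page units


def assign_answers(questions: list[Box], answers: list[Box]) -> dict[str, list[Box]]:
    """Attach each handwritten region to the nearest question label."""
    grouped: dict[str, list[Box]] = {q.label: [] for q in questions}
    for a in answers:
        nearest = min(questions, key=lambda q: hypot(q.x - a.x, q.y - a.y))
        grouped[nearest.label].append(a)
    return grouped


# Two questions in a left column, one in a right column.
questions = [Box("Q1", 10, 10), Box("Q2", 10, 50), Box("Q3", 60, 10)]
answers = [Box("", 30, 12), Box("", 28, 53), Box("", 85, 9)]
layout = assign_answers(questions, answers)
```

A real layout model has to cope with wrapped questions and skewed photos, which is exactly where a distance heuristic like this one breaks down and a trained detector earns its keep.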

2. Reading handwriting

Once questions are isolated, each answer is recognised in context. The key is that the app has already seen the printed question, so it knows what the expected answer should look like. That turns an open-ended handwriting problem into a constrained one: the model can answer not just "what did the child write?" but "how confident am I, and how close is it to the expected form?"

That's why a scrawled 56 with a slightly weird top on the 5 still gets marked correct for 7 × 8. What the model already expects from the question, combined with the shape of what it sees, is enough to resolve the ambiguity. And when it isn't, a good tool will tell you, not quietly guess.
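One way to picture this is as a re-weighting step. The sketch below assumes the recogniser returns a probability for each candidate reading, boosts the candidate that matches the expected answer, and flags the result for review when the winner is not clear enough. The function name, the `prior_weight` of 3.0 and the 0.8 threshold are all illustrative assumptions, not values from any real product:

```python
def mark_with_context(candidates: dict[str, float], expected: str,
                      prior_weight: float = 3.0, threshold: float = 0.8):
    """Re-weight recogniser candidates towards the expected answer.

    `candidates` maps each possible reading to the recogniser's probability.
    Returns (reading, confidence, needs_review).
    """
    weighted = {
        text: p * (prior_weight if text == expected else 1.0)
        for text, p in candidates.items()
    }
    total = sum(weighted.values())
    reading, score = max(weighted.items(), key=lambda kv: kv[1])
    confidence = score / total
    return reading, confidence, confidence < threshold


# 7 x 8: the 5 has a weird top, but context settles it.
clear = mark_with_context({"56": 0.60, "36": 0.35, "50": 0.05}, expected="56")

# Here the shapes point elsewhere, so the result is flagged for a human.
unclear = mark_with_context({"54": 0.50, "56": 0.30, "34": 0.20}, expected="56")
```

The second call is the important one: context can nudge a reading towards the expected answer, so anything it could not settle convincingly should go to a person instead of being marked silently.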

3. Validating answers

Reading the handwriting is only half the job. The other half is deciding whether the answer is right. For arithmetic, that's trivial. For fractions, units and word problems it's more interesting: 0.5, ½, and 1/2 should all be treated as equivalent for an early primary fractions question, while 5 cm and 50 mm might both be correct depending on the prompt.

The best tools today do this comparison symbolically rather than by string match. They also handle partial credit thoughtfully (for example, "wrong operation but right digits" versus "right idea, wrong label"), flagging a method mistake differently from a transcription slip. This matters a lot when the goal is supporting learning, not just ticking boxes.
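As a minimal sketch of what "symbolically rather than by string match" can mean, the snippet below parses answers into exact fractions using only Python's standard library before comparing them. The helper names and the small unit table are assumptions for illustration; a real tool would cover far more forms:

```python
from fractions import Fraction
import unicodedata

MM_PER_UNIT = {"mm": 1, "cm": 10, "m": 1000}  # illustrative subset of units


def parse_number(text: str) -> Fraction:
    """Parse '0.5', '1/2' or '½' into an exact Fraction."""
    text = text.strip()
    if len(text) == 1 and not text.isdigit():
        # Single vulgar-fraction characters such as '½' or '¾'.
        return Fraction(unicodedata.numeric(text)).limit_denominator(1000)
    return Fraction(text)  # handles '0.5', '1/2' and '3'


def parse_length(text: str) -> Fraction:
    """Parse '5 cm' or '50 mm' into millimetres."""
    value, unit = text.split()
    return parse_number(value) * MM_PER_UNIT[unit]


def equivalent(given: str, expected: str, is_length: bool = False) -> bool:
    """Compare two answers by value instead of by spelling."""
    parse = parse_length if is_length else parse_number
    try:
        return parse(given) == parse(expected)
    except (ValueError, KeyError, ZeroDivisionError):
        return False  # could not parse: no match, so leave it to a human


print(equivalent("0.5", "½"), equivalent("1/2", "½"))
print(equivalent("5 cm", "50 mm", is_length=True))
```

Whether 50 mm should be accepted when the question asked for centimetres is a marking decision, which is why unit conversion is an explicit option here and not the default.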

Key takeaways

- Maths OCR is a short pipeline, not a single model: detect → read → validate.

- Context (the expected answer) is what turns messy handwriting into reliable marks.

- Good tools treat equivalent answers equivalently and surface uncertainty rather than hiding it.

Where it still struggles

It's easy to overclaim, so here's the honest picture of what modern maths OCR still gets wrong:

- Very young handwriting. Ages 4–6 are the hardest: digits vary in size and orientation. Expect to review flagged answers rather than trusting blindly.

- Heavy workings with no clear final answer. If a child shows every step of long division but doesn't circle or underline the result, some tools pick the wrong number as "the answer".

- Poor photos. With extreme glare, deep shadows across the page, dim lighting or a phone held at 45°, the app may misread even when the handwriting is fine.

- Non-standard notation. Different schools use different conventions for remainders, improper fractions, or decimal points vs decimal commas. Tools that let teachers and tutors configure these once, per class or cohort, give much better results than tools that force a single convention globally.
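The last point, per-class conventions, is simple to picture in code. This is a sketch only: `ClassConventions` and its two fields are hypothetical, chosen to mirror the decimal and remainder examples above, and a real tool would need more settings than these:

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class ClassConventions:
    decimal_separator: str = "."  # "," for classes that write 3,5
    remainder_marker: str = "r"   # e.g. "7 r 2"; some schools write "rem"


def parse_decimal(text: str, conv: ClassConventions) -> Fraction:
    """Parse a decimal written with the class's own separator."""
    return Fraction(text.strip().replace(conv.decimal_separator, "."))


def parse_quotient(text: str, conv: ClassConventions) -> tuple[int, int]:
    """Parse '7 r 2' style answers into (quotient, remainder)."""
    quotient, sep, remainder = text.lower().partition(conv.remainder_marker)
    return int(quotient), int(remainder) if sep else 0


comma_class = ClassConventions(decimal_separator=",", remainder_marker="rem")
print(parse_decimal("3,5", comma_class))       # same value as 3.5
print(parse_quotient("7 rem 2", comma_class))  # quotient 7, remainder 2
```

Setting the conventions once per class means the same handwritten "3,5" can be read as three and a half in one classroom without changing how any other class is marked.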

The right mental model is this: maths OCR today is a very competent marking assistant, not an infallible marker. The win is not that the tool is always right: it's that the exceptions become the only thing a human has to look at.

See it on your own worksheets

redmarker is free to download with 10 worksheet credits every month.

What to look for in a marking tool

If you're evaluating an OCR-based marking app for a classroom, a tuition business, or your own family, the questions below tend to separate serious tools from novelties:

- Does it work with the worksheets you already use? Good tools don't force you to print their templates. They should read standard drill and booklet formats from the major maths programmes.

- Can you correct a result in seconds? The point of marking is feedback. If fixing a misread takes three taps, you'll use it. If it takes ten, you won't.

- Is there per-student and per-class progress tracking? Marking is most valuable when the data it produces tells you what to revisit next week, not just how someone did yesterday.

- How is children's data treated? Worksheets contain identifiable information. Look for clear, minimal data retention, transparent processing, and the option to remove data on request.

- What happens when it's wrong? Serious tools expose their confidence and let you override. Tools that pretend to be certain are the ones that create trust problems the first time they get something wrong.

The bottom line

The quiet story of edtech today is not the headline-grabbing chatbots. It's the unglamorous, specific tools that solve one problem very well. OCR-based maths marking is one of the clearest examples: a discrete, measurable task that used to eat hours every week for teachers, tutors, and parents, now handled in the time it takes to make a cup of tea.

The technology isn't magic, and it isn't perfect. But it's well past the point where it's useful every single day. And for anyone who spends their evenings with a pile of worksheets and a red pen (class teacher, private tutor, or parent), that's the metric that actually matters.